Long-running LLM agents do not fail because their models are wrong. They fail because they forget — or because they try to remember everything at once and collapse under token costs that scale with conversation length. The architectural answer is not a bigger context window. It is a memory hierarchy that treats agent state the same way an operating system treats RAM: tiered, demand-paged, and governed by explicit eviction policy.

The memory problem long-running LLM agents actually hit

Bottom Line: Flat vector recall — stuffing all prior context into either the prompt or a single vector retrieval call — cannot deliver continuity across multi-session agents at production scale. It either exhausts the context window and drives token cost up with session length, or it retrieves semantically adjacent but contextually wrong memories that silently corrupt the agent's reasoning. Hierarchical memory solves both problems by separating what the agent needs right now from what the agent might need from what the agent should remember forever, and by loading each layer on demand rather than wholesale.

The dominant pattern in agentic workflow state management today is one of two failure modes: the agent carries its entire conversation history in the prompt (burning tokens at every step), or it discards history between sessions and treats each invocation as stateless. Neither scales.

The Mem0 research team quantified this precisely. On the LOCOMO benchmark, their hierarchical memory architecture reduced p95 latency by over 91% compared to full-context baselines, and cut token costs by more than 90%, while simultaneously improving answer quality — 5% relative gain on single-hop questions, 11% on temporal questions, and 7% on multi-hop questions over best-in-class baselines per question type. The authors frame the core problem directly: "Large Language Models have demonstrated remarkable prowess in generating contextually coherent responses, yet their fixed context windows pose fundamental challenges for maintaining consistency over prolonged multi-session dialogues."

Flat RAG architecture, the other common approach, substitutes retrieval for memory but does not constitute memory. A vector similarity search retrieves documents that are semantically close to the current query — it does not preserve task state, goal hierarchies, intermediate plans, or the temporal ordering of user commitments. An agent that only does RAG will retrieve the right facts and still hallucinate a plan, because plan state is not a document; it is a structured object that must survive between turns.

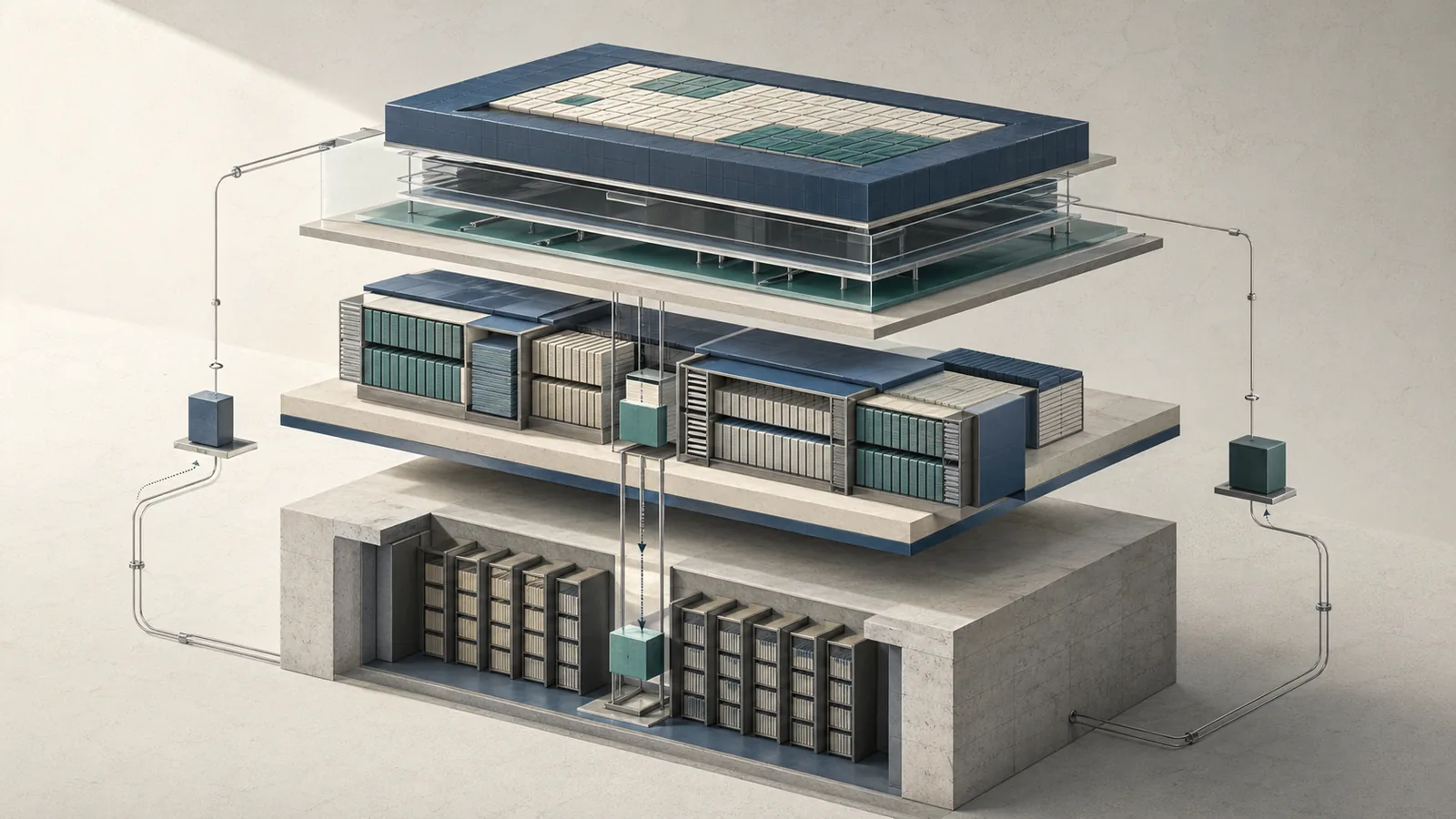

Why the context window behaves like L1 cache, not a database

The context window is the highest-bandwidth, highest-cost, shortest-lived storage in the entire agent memory stack. Every token in it is re-processed at every inference step. That is the defining property of L1 cache: it is fast, expensive, and small relative to the total information the system needs. Current models like GPT-5.4 support up to 1.05 million tokens, but that scale does not make the context window a database. It makes the cost problem worse: once prompts exceed the large-prompt threshold documented in the GPT-5.4 API documentation, higher-rate billing applies for the session.

A database persists passively between reads. The context window must be explicitly constructed on every call. The architectural implication is that content in the context window should be treated like cache lines — loaded precisely because the current task needs them, evicted as soon as they are no longer hot, and never held just because they might be useful later.

The common mistake in agent memory discussions is a conflation: teams treat their vector database as a memory system, when in fact it maps to L3 archival storage. The full hierarchy has three tiers:

    flowchart LR
        subgraph L1["L1 · Prompt Context (Hot)"]
            A["Active instructions\nCurrent turn input\nTool call results (ephemeral)\nLatest user message"]
        end
        subgraph L2["L2 · Working Memory (Warm)"]
            B["Task plan & goal state\nSession scratchpad\nPending sub-tasks\nRecent turn summaries"]
        end
        subgraph L3["L3 · Archival Retrieval (Cold)"]
            C["Vector store\n(pgvector / Pinecone / FAISS)\nLong-term facts\nUser preference history\nKnowledge archives"]
        end
        L3 -- "demand page into L2 on retrieval hit" --> L2
        L2 -- "promote hot state into L1 on task start" --> L1
        L1 -- "demote completed state to L2 on turn end" --> L2
        L2 -- "evict cold state to L3 on compaction" --> L3

Each tier maps to a distinct storage backend, latency profile, and cost model. The boundaries between tiers are not fixed — content moves across them under explicit promotion and eviction rules, exactly as a hardware cache controller manages cache lines.

L1: Immediate prompt context and why it is the most expensive layer

L1 holds only what the model must attend to in the current inference call: the system prompt, the active user message, the output schemas for current tools, and the immediate results of the most recent tool invocations. Everything else is overhead.

The cost pressure at L1 compounds: every token in the prompt is billed and processed again on every call, so a larger prompt raises both spend and latency at each step of the session. Under GPT-5.4 pricing, once a session crosses the documented large-prompt threshold, the higher-rate tier applies for that session. That alone makes poor L1 hygiene expensive, even though the sources give no official figure for the share of total spend that prompt context accounts for.

Pro Tip: Ephemeral tool output (the raw JSON from an API call, a scraped HTML body, an intermediate code execution result) must never persist in L1 across turns. Extract the conclusions from tool output, write them to L2 as structured state, and drop the raw payload before the next inference. A single search result page left in context across ten turns is billed ten times over and can easily cost more than ten fresh retrievals from L3.

Agentic workflow state management at L1 means treating the prompt as a register file, not a log. What the model needs to act belongs there; what it needs to remember belongs in L2 or L3.
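The end-of-turn hygiene described above can be sketched in a few lines. This is a minimal illustration, not a framework API: `L1Context`, `end_of_turn`, and the caller-supplied `extract` function are hypothetical names introduced here.

```python
from dataclasses import dataclass, field


@dataclass
class L1Context:
    """Hypothetical per-call prompt context; rebuilt on every inference."""
    system_prompt: str
    user_message: str
    task_state: dict                                   # compact state promoted from L2
    tool_results: list = field(default_factory=list)   # raw, ephemeral payloads


def end_of_turn(l1: L1Context, l2_state: dict, extract) -> L1Context:
    """Write conclusions from tool output to L2, then drop the raw payloads.

    `extract` is an assumed caller-supplied function that maps one raw tool
    payload to a small dict of conclusions.
    """
    for payload in l1.tool_results:
        l2_state.setdefault("conclusions", []).append(extract(payload))
    # The next turn's L1 starts without any raw tool output.
    return L1Context(l1.system_prompt, "", l2_state, tool_results=[])


raw = {"status": 200, "body": "<html>...large scraped page...</html>", "price": 19.99}
l2 = {"goal": "compare prices"}
ctx = L1Context("You are a shopping agent.", "How much is it?", l2, [raw])
ctx = end_of_turn(ctx, l2, extract=lambda p: {"price": p["price"]})
```

After the call, only the extracted price survives in L2; the scraped body is gone before the next prompt is assembled.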

L2: Short-term working memory for task state and scratchpad continuity

L2 holds the agent's active task graph: the decomposed plan, intermediate conclusions, completed sub-tasks, and the current goal hierarchy. This is the scratchpad that persists across turns within a session but does not need to be re-processed from scratch on every call. It is warm memory — not in the prompt by default, but loaded into L1 at the start of each turn and flushed back after.

The design challenge at L2 is compaction. An agent that appends every assistant response and tool result to a growing L2 buffer will exhaust its working memory budget within a few dozen turns. The correct behavior is progressive summarization: when L2 reaches a configured token threshold, the agent issues a compaction pass — a targeted LLM call that condenses the scratchpad into a structured state object representing goals, decisions made, and open sub-tasks.

Production Note: Trigger L2 compaction when working memory approaches your configured budget rather than waiting for overflow. The exact threshold is authorial engineering guidance, not a verified benchmark; a practical starting range is 60–70% of session token budget if your compaction model and backend can tolerate the extra call. Compacting early preserves fidelity, and LangGraph's checkpoint mechanism provides a natural compaction hook: store the compacted state dict as the checkpoint payload, not the full message history.
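A threshold-triggered compaction pass might look like the sketch below. The four-characters-per-token estimate and the `summarize` callable (standing in for the compaction LLM call) are assumptions made for illustration; the 0.65 default simply mirrors the starting range suggested above.

```python
def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token. Swap in a real tokenizer.
    return max(1, len(text) // 4)


def maybe_compact(scratchpad: list, budget_tokens: int, summarize,
                  threshold_pct: float = 0.65) -> list:
    """Compact the L2 scratchpad once it crosses threshold_pct of its budget."""
    used = sum(estimate_tokens(entry) for entry in scratchpad)
    if used < threshold_pct * budget_tokens:
        return scratchpad              # under the trigger: no extra model call
    return [summarize(scratchpad)]     # one structured summary replaces the log


notes = ["x" * 400] * 10               # about 1,000 estimated tokens
stub = lambda entries: f"summary of {len(entries)} entries"
untouched = maybe_compact(notes, budget_tokens=8000, summarize=stub)
compacted = maybe_compact(notes, budget_tokens=1200, summarize=stub)
```

With an 8,000-token budget the scratchpad is left alone; with a 1,200-token budget the same notes cross the 65% trigger and collapse into a single summary entry.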

Mem0's architecture confirms the value of this approach: their method's over 91% lower p95 latency versus full-context baselines is largely attributable to not re-ingesting full history on every call — the functional analog of keeping L2 compact and loading only relevant state into L1.

L3: Long-term retrieval from vector stores and knowledge archives

L3 is archival storage — facts, preferences, historical decisions, and knowledge that the agent should be able to recall but does not need in every prompt. Vector databases including pgvector, Pinecone, and FAISS are the canonical backends. Retrieval from L3 is triggered on demand, not preloaded — the agent issues an embedding query when it detects a knowledge gap, and the result is loaded into L1 for the current turn.

The critical architectural point is that L3 is not a substitute for L2. A vector store can tell an agent what a user prefers; it cannot tell the agent what sub-task it was in the middle of executing. State and knowledge are different objects with different retrieval semantics.

Limitation Note: The verified sources in this article do not provide a controlled benchmark that compares pgvector, Pinecone, or FAISS directly against in-context state storage under the same task. The supported claim is narrower: archival retrieval is suitable for persistent facts and preferences, while short-term task state belongs in a structured working store.

| Dimension | Archival Recall (L3) | Semantic Search (L3 variant) | State Storage (L2) |
|---|---|---|---|
| Backend | Vector DB (Pinecone, pgvector) | Embedding + reranker | Structured store (Redis, Postgres) |
| Query type | ANN similarity | Semantic relevance | Key-value or graph lookup |
| Latency | 10–100 ms | 50–300 ms (with reranker) | < 5 ms |
| Content type | Facts, preferences, documents | Passages, conversation chunks | Goals, plans, task state |
| Session scope | Persistent across sessions | Persistent across sessions | Single session or short-term |
| Evictable? | Yes, with TTL or semantic eviction | Yes | Yes, on task completion |

Mem0's evaluation on LOCOMO demonstrates that an L3-backed retrieval layer, properly integrated, improves factual quality on multi-hop and temporal questions while keeping latency well below full-context approaches — because only the retrieved facts enter the prompt, not the entire history.

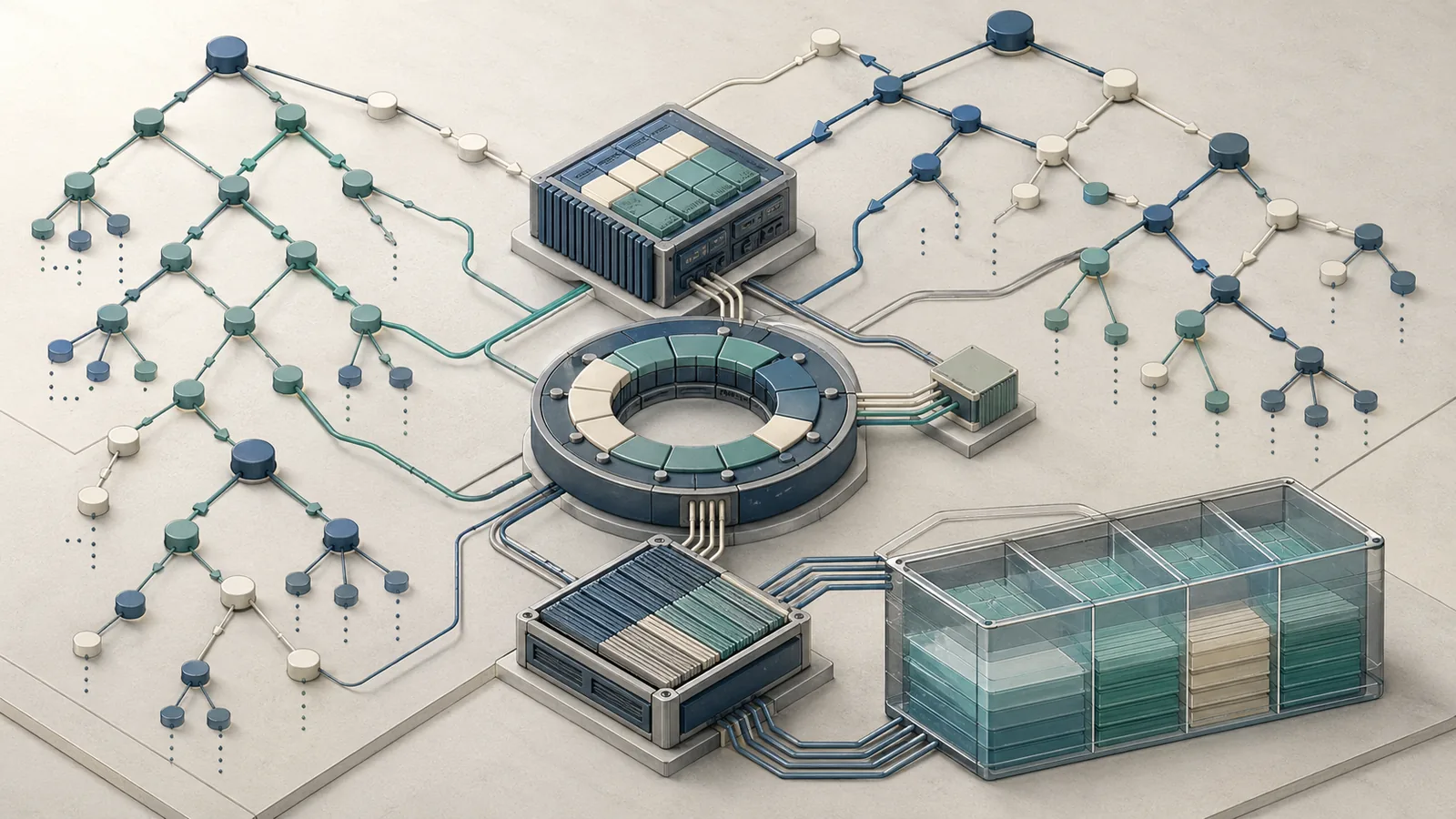

Demand paging for agent memory: what gets loaded, what gets evicted, what gets rebuilt

Demand paging in operating systems loads a memory page into RAM only when a process faults on it — not proactively. Agent memory should work the same way. The agent does not preload all user history into L1 at session start. It starts with a minimal L1 (system prompt, current message, active task state from L2), and triggers retrieval from L3 only when it detects a gap.

The Mem0 architecture operationalizes this directly: dynamic extraction, consolidation, and retrieval of salient memories across sessions, activated by the current task context rather than by time or position in history. Their results — over 90% token-cost savings versus full-context methods — are the empirical case for demand paging over wholesale context loading.

A concrete policy specification for a three-tier paging system, presented here as authorial guidance rather than a verified benchmark, looks like this:

    memory_paging_policy:
      l1_prompt_budget_tokens: 32000
      l2_working_memory_budget_tokens: 8000
      l2_compaction_trigger:
        threshold_pct: 0.65  # authorial default, not a verified benchmark
        compaction_model: "gpt-4.1-mini"
        output_format: "structured_state_json"
      l3_retrieval:
        trigger: "page_fault"  # only retrieve when agent signals missing context
        top_k: 5
        rerank: true
        min_score_threshold: 0.72  # authorial default, not a verified benchmark
      promotion_rules:
        l3_to_l2: "on retrieval hit with score >= 0.85 AND recency < 7 days"
        l2_to_l1: "on task start, load active goal + last 3 compacted states"
      eviction_rules:
        l1_eviction: "end of turn: demote all non-sticky content to L2"
        l2_eviction: "on compaction: move completed sub-tasks to L3 archive"
        l3_eviction: "LRU with semantic override; see eviction policy section"

The key design decision in this spec is that retrieval from L3 is pull-only. The agent explicitly signals a page fault — a missing fact, a missing state object — and the memory controller loads it. This prevents speculative loading that fills L1 with context that never gets attended to.
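As a rough sketch of the pull-only rule, the handler below runs only when a page fault has been signaled and applies the spec's `top_k` and `min_score_threshold` values. `RetrievalPolicy`, `handle_page_fault`, and the `search` callable are hypothetical; `search` stands in for a thin wrapper over whichever vector store backs L3.

```python
from dataclasses import dataclass


@dataclass
class RetrievalPolicy:
    top_k: int = 5
    min_score_threshold: float = 0.72   # illustrative default from the spec


def handle_page_fault(query: str, search, policy: RetrievalPolicy) -> list:
    """Load from L3 only after the agent has signaled missing context.

    `search(query)` is assumed to return dicts that carry a "score" key.
    """
    hits = [h for h in search(query) if h["score"] >= policy.min_score_threshold]
    hits.sort(key=lambda h: h["score"], reverse=True)
    return hits[: policy.top_k]


fake_search = lambda q: [
    {"text": "unrelated note", "score": 0.40},
    {"text": "lives in Oslo", "score": 0.74},
    {"text": "prefers email", "score": 0.91},
]
loaded = handle_page_fault("contact preference", fake_search, RetrievalPolicy(top_k=2))
```

Nothing in this path runs at session start, which is the point: low-scoring candidates are dropped and the number of records that can reach the prompt is capped.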

Page faults in agent terms: missing facts, missing state, and missing plans

An agent page fault occurs when the model's response signals a knowledge gap that should have been resolvable from memory. Three categories exist: missing facts (the agent does not know a user's stated preference), missing state (the agent does not know where it was in a multi-step task), and missing plans (the agent cannot reconstruct its goal hierarchy after a session gap).

Detecting page faults in production requires instrumentation, not hope. A practical pattern is to have the agent emit a structured signal — for example, a retrieve_memory tool call — when it detects uncertainty about a fact it believes it should know. That signal is the page fault; the retrieval call is the page load.
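One way to instrument that signal is sketched below. The tool description is written in generic JSON-Schema style and would need to be wrapped in whatever tool-calling envelope your model provider expects; the `output` dict shape (`tool`, `arguments`) and the `kind` field are assumptions made for this example.

```python
from collections import Counter

# Hypothetical tool description; adapt the envelope to your provider's format.
RETRIEVE_MEMORY_TOOL = {
    "name": "retrieve_memory",
    "description": "Request a fact, state object, or plan the agent believes it should know.",
    "parameters": {
        "type": "object",
        "properties": {
            "kind": {"type": "string", "enum": ["fact", "state", "plan"]},
            "query": {"type": "string"},
        },
        "required": ["kind", "query"],
    },
}

fault_counts = Counter()   # instrumentation: page faults by category


def on_model_output(output: dict):
    """Return the page-fault request if the model asked for memory, else None."""
    if output.get("tool") != RETRIEVE_MEMORY_TOOL["name"]:
        return None
    args = output["arguments"]
    fault_counts[args["kind"]] += 1
    return args


request = on_model_output(
    {"tool": "retrieve_memory", "arguments": {"kind": "state", "query": "current step"}}
)
plain = on_model_output({"text": "Here is your answer."})
```

Counting faults by category gives a direct view of which of the three gaps (facts, state, plans) the agent hits most often.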

Watch Out: Do not confuse retrieval miss rate with true memory loss. A retrieval miss (L3 returns no result above the similarity threshold) means the fact was never stored, or was stored under an incompatible embedding. True memory loss means the fact was stored and should have been retrieved but was not — a precision/recall failure in the vector index. Conflating the two leads to over-provisioning L3 storage when the actual problem is embedding quality or chunk granularity.

Mem0's LOCOMO results — 5% improvement on single-hop, 11% on temporal, 7% on multi-hop questions — represent the measurable benefit of correctly handling page faults for each question type: single-hop faults are simple fact retrievals, temporal faults require time-ordered state, and multi-hop faults require chained retrievals across L2 and L3.

Eviction policies: LRU, LFU, and semantic eviction compared

No single eviction policy dominates all agent memory regimes. The choice depends on access pattern, content type, and whether temporal recency or semantic relevance predicts future utility.

| Policy | Trigger | Benefit | Failure mode | Best fit |
|---|---|---|---|---|
| LRU (Least Recently Used) | Time since last access | Simple to implement; handles bursty access well | Evicts rare but critical facts that haven't been accessed recently | Session scratchpad; short-horizon agents |
| LFU (Least Frequently Used) | Access count over window | Retains frequently referenced preferences and facts | Newly added critical facts are vulnerable before they accumulate hits | User preference stores; domain knowledge archives |
| Semantic Eviction | Embedding distance to current task context | Retains contextually relevant memories regardless of access pattern | Computationally expensive; requires embedding the current task state on each eviction pass | Long-horizon research agents; multi-domain task agents |

Engineering guidance, not a verified benchmark conclusion: LRU is often the pragmatic default for L2 working memory because task state access is temporally clustered — you need the last few plan steps, rarely the ones from ten turns ago. LFU is more appropriate for L3 preference stores where a user's standing preferences should survive long gaps between sessions. Semantic eviction is the strongest policy for L3 archival retrieval in agents with variable task domains, but the embedding cost of re-scoring every candidate on each eviction pass is non-trivial at scale; batch the eviction pass rather than running it per-turn.
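The two simpler policies, and the failure mode each carries, can be reproduced with standard-library containers. This is a toy sketch with hypothetical class names (`LRUStore`, `LFUStore`), not a production cache.

```python
from collections import Counter, OrderedDict


class LRUStore:
    """Evicts the entry that was touched longest ago."""
    def __init__(self, capacity: int):
        self.capacity, self.items = capacity, OrderedDict()

    def get(self, key):
        if key in self.items:
            self.items.move_to_end(key)             # mark as most recently used
            return self.items[key]
        return None

    def put(self, key, value):
        self.items[key] = value
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            return self.items.popitem(last=False)   # (key, value) that was evicted
        return None


class LFUStore:
    """Evicts the entry with the fewest recorded reads."""
    def __init__(self, capacity: int):
        self.capacity, self.items, self.hits = capacity, {}, Counter()

    def get(self, key):
        if key in self.items:
            self.hits[key] += 1
            return self.items[key]
        return None

    def put(self, key, value):
        evicted = None
        if key not in self.items and len(self.items) >= self.capacity:
            victim = min(self.items, key=lambda k: self.hits[k])
            evicted = (victim, self.items.pop(victim))
            del self.hits[victim]
        self.items[key] = value
        return evicted


lru = LRUStore(2)
lru.put("allergy", "penicillin")             # rare but critical
lru.put("step_1", "done")
lru.get("step_1")
lru_evicted = lru.put("step_2", "pending")   # the allergy fact is the LRU victim

lfu = LFUStore(2)
lfu.put("tone", "formal")
lfu.get("tone")
lfu.get("tone")
lfu.put("new_address", "Lisbon")             # just written, zero reads so far
lfu_evicted = lfu.put("timezone", "UTC")     # the new address is the LFU victim
```

The LRU store drops the rarely read allergy fact, and the LFU store drops the address that was written moments earlier, matching the failure-mode column in the table.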

Mem0's consolidation mechanism — extracting and merging salient memories rather than storing raw conversation chunks — implicitly functions as a semantic eviction filter at write time. Facts that are not salient enough to survive extraction are never written to L3, which is a form of pre-eviction at ingest.

Promotion and demotion rules across memory tiers

Content moves up the hierarchy (toward L1) when the current task context assigns it high utility, and down (toward L3) when it becomes cold. Defining promotion and demotion mathematically prevents ad-hoc decisions that corrupt agent continuity.

A utility function for promotion scoring:

$$U(m, t) = \alpha \cdot \text{sem\_sim}(m, q_t) + \beta \cdot \text{recency}(m, t) + \gamma \cdot \text{freq}(m, t)$$

Where:

- $m$ is a memory record
- $q_t$ is the embedding of the current task context at time $t$
- $\text{sem\_sim}$ is cosine similarity between the memory embedding and the task context
- $\text{recency}(m, t) = e^{-\lambda(t - t_m)}$ is exponential decay since last access
- $\text{freq}(m, t)$ is normalized access frequency over a rolling window
- $\alpha, \beta, \gamma$ are weights summing to 1 (typical starting point: 0.5, 0.3, 0.2)

Records with $U > \theta_{\text{promote}}$ (for example, 0.75) are candidates for promotion from L3 to L2 at the start of the next session. Records with $U < \theta_{\text{demote}}$ (for example, 0.25) after a compaction pass are evicted from L2 to L3. Those thresholds are illustrative design defaults, not verified benchmark cutoffs.
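A direct transcription of the scoring rule into Python, under stated assumptions: all three terms are already normalized to the range 0 to 1, time is measured in seconds, and the decay rate defaults to one per day. The decay default and the function names are choices made for this sketch, not values from the source.

```python
import math


def utility(sem_sim: float, age_seconds: float, freq: float,
            alpha: float = 0.5, beta: float = 0.3, gamma: float = 0.2,
            decay_lambda: float = 1.0 / 86_400) -> float:
    """U = alpha * sem_sim + beta * exp(-lambda * age) + gamma * freq."""
    recency = math.exp(-decay_lambda * age_seconds)
    return alpha * sem_sim + beta * recency + gamma * freq


def tier_action(u: float, promote: float = 0.75, demote: float = 0.25) -> str:
    """Map a utility score onto the illustrative promotion and demotion thresholds."""
    if u > promote:
        return "promote"
    if u < demote:
        return "demote"
    return "hold"


hot = utility(sem_sim=0.9, age_seconds=0, freq=0.5)            # 0.45 + 0.30 + 0.10
cold = utility(sem_sim=0.2, age_seconds=10 * 86_400, freq=0.1)
```

The first record scores 0.85 and clears the promotion threshold; the second, ten days stale and weakly related, falls well under 0.25 and is a demotion candidate.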

The RAG architecture connection: when a record is loaded from L3 via vector retrieval, its $\text{recency}$ and $\text{freq}$ terms should be updated in the L3 metadata store, not just the embedding index. This metadata update is what enables LFU and recency-weighted eviction at L3; pure vector databases that store only embeddings and payload cannot implement this without a parallel metadata table (e.g., a pgvector table with an accessed_at column and an access_count integer).
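The parallel metadata table can be sketched with the standard-library `sqlite3` module standing in for Postgres. The table name `memory_meta` and the helper `record_access` are illustrative; only the `accessed_at` and `access_count` columns come from the text above.

```python
import sqlite3
import time
from typing import Optional

# sqlite3 is a stand-in for Postgres; the schema is illustrative only.
db = sqlite3.connect(":memory:")
db.execute("""CREATE TABLE memory_meta (
    memory_id    TEXT PRIMARY KEY,
    accessed_at  REAL NOT NULL,
    access_count INTEGER NOT NULL DEFAULT 0)""")


def record_access(memory_id: str, now: Optional[float] = None) -> None:
    """Upsert recency and frequency metadata each time a record is retrieved."""
    now = time.time() if now is None else now
    db.execute(
        """INSERT INTO memory_meta (memory_id, accessed_at, access_count)
           VALUES (?, ?, 1)
           ON CONFLICT(memory_id) DO UPDATE SET
               accessed_at  = excluded.accessed_at,
               access_count = access_count + 1""",
        (memory_id, now),
    )


record_access("m1", now=100.0)
record_access("m1", now=200.0)
row = db.execute(
    "SELECT accessed_at, access_count FROM memory_meta WHERE memory_id = 'm1'"
).fetchone()
```

Calling `record_access` from the retrieval path keeps the recency and frequency inputs of the utility function current without touching the embedding index.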

How the hierarchy changes token cost, latency, and answer continuity

The empirical case for hierarchical memory is anchored in Mem0's LOCOMO evaluation. A full-context baseline — carrying the entire conversation history in the prompt — achieves the highest theoretical completeness but pays for it with latency and cost that scale with conversation length. Mem0's hierarchical approach cuts p95 latency by over 91% and token cost by more than 90% while improving answer quality on three of four question categories.

| Dimension | Full-context baseline | Flat RAG | Hierarchical memory (Mem0-style) | Verified status |
|---|---|---|---|---|
| Token cost per turn | O(session length) | O(retrieval chunk size) | O(L1 budget), bounded | Verified as qualitative scaling behavior |
| p95 latency | Scales with total tokens | Moderate (retrieval + generation) | >91% lower than full-context (LOCOMO) | Verified on LOCOMO for Mem0-style system |
| Single-hop QA accuracy | Baseline | Near-baseline | +5% relative (LOCOMO) | Verified on LOCOMO |
| Temporal QA accuracy | Baseline | Degrades without temporal ordering | +11% relative (LOCOMO) | Verified on LOCOMO |
| Multi-hop QA accuracy | Baseline | Degrades without state chaining | +7% relative (LOCOMO) | Verified on LOCOMO |
| Session continuity | High (all history present) | Low (stateless between sessions) | High (L2 preserves state; L3 preserves facts) | Supported by architecture, not a direct benchmark metric |
| Retrieval overhead | Not applicable | Retrieval + rerank required | Present, but no direct verified measurement in the cited sources | Limitation: no direct retrieval-overhead number in the sources |

The retrieval overhead of L3 queries — the latency cost of embedding and ANN search — is one dimension where hierarchical memory can pay a per-turn cost relative to a single small prompt. For a single-turn query where all relevant history fits comfortably in the context window, flat context can be faster. The hierarchy earns its cost at session 5, 10, or 50 — where full-context latency has grown proportionally and the retrieval overhead is a fixed per-turn constant.
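The crossover can be made concrete with back-of-the-envelope arithmetic. The figures below (500 new tokens per turn, a 4,000-token bounded prompt) are invented for illustration and are not measurements from the cited sources.

```python
def full_context_tokens(turns: int, tokens_per_turn: int) -> int:
    # Turn i re-sends all i turns so far, so the total is an arithmetic series.
    return tokens_per_turn * turns * (turns + 1) // 2


def bounded_tokens(turns: int, l1_budget: int) -> int:
    # Every turn sends at most the L1 budget, regardless of history length.
    return turns * l1_budget


short_full = full_context_tokens(10, 500)     # 27,500 prompt tokens in total
short_bounded = bounded_tokens(10, 4000)      # 40,000 prompt tokens in total
long_full = full_context_tokens(50, 500)      # 637,500 prompt tokens in total
long_bounded = bounded_tokens(50, 4000)       # 200,000 prompt tokens in total
```

Under these assumptions the full-context approach is cheaper for the first 15 turns and more than three times as expensive by turn 50, which is the shape of the trade-off described above.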

Where flat RAG wins and where it fails

Flat RAG — embed the query, retrieve top-k documents, stuff into prompt, generate — is a practical architecture for a specific regime: single-session, low-stakes, stateless question-answering over a fixed document corpus.

It fails the moment the task requires any of the following: tracking what was decided in a prior turn, maintaining a goal hierarchy across sessions, updating a belief based on new information from the user, or correlating facts across multiple retrieved documents in a temporal sequence. These are state operations, and RAG has no state.

Pro Tip: Flat RAG is sufficient for agents that field lookup-style queries against a document corpus (internal knowledge base search, product catalog Q&A, policy lookup) where no personalization or session continuity is required. The inflection point where you need L2 working memory is when the agent must plan across more than one turn or remember a user's prior stated preferences. Add L2 before adding L3 — the absence of working memory hurts more than the absence of archival retrieval.

The GPT-5.4 pricing model reinforces this: a 1M-token context window sounds like a solution to the memory problem, but prompts above the documented large-prompt threshold are billed at a higher rate for the session. Architectures that rely on context-window scale as a substitute for memory hierarchy will hit pricing cliffs at exactly the volume where they need reliability most.

Where hierarchical memory pays off in customer support and research agents

The two canonical use cases for hierarchical memory are per-account support agents and long-horizon research agents. Their memory requirements differ significantly by tier.

| Use case | Required L1 content | Required L2 content | Required L3 content | Primary retrieval pattern |
|---|---|---|---|---|
| Customer support | Current issue, account tier, open ticket | Prior resolution history (session), stated preferences | Account history (all sessions), product knowledge | Scoped by tenant ID; temporal ordering matters |
| Research agent | Current hypothesis, active sources | Research plan, cited claims, open questions | Literature archive, prior findings | Semantic similarity; multi-hop chaining |
| Coding assistant | Active file, current error | Project structure, design decisions | Code history, API docs | Hybrid: semantic + exact-match |
| Personalized assistant | Current request | Short-term task state | Long-term preferences, life context | Recency-weighted; preference consolidation |

Customer support agents need L3 scoped strictly to the account — a fact about one user must never surface in another user's context. Research agents need L3 to support multi-hop retrieval chains: finding paper A leads to citing author B leads to retrieving paper C. Mem0's architecture, designed explicitly for prolonged multi-session dialogues, aligns most directly with customer support continuity; its LOCOMO benchmark covers the temporal and multi-hop patterns that research agents require.

Production failure modes that break agent memory systems

Watch Out: The three most common failure modes in production agent memory systems are (1) stale memories that contradict updated user state — the agent recalls a preference the user changed six months ago; (2) prompt bloat from over-loading L1 — the agent retrieves too many L3 results and saturates the context budget, crowding out the actual task; and (3) cross-user memory contamination in multi-tenant deployments — one user's retrieved facts appear in another user's context due to absent or misconfigured namespace isolation. All three are silent failures: the agent produces a response, but the response is wrong.

"Fixed context windows pose fundamental challenges for maintaining consistency over prolonged multi-session dialogues," as Mem0's authors note — but the failure modes above are not window failures, they are architecture failures that any window size will fail to fix.

Stale or contradictory memories

Stale memories accumulate when the memory system stores facts as immutable records. A user changes their city; the old city record persists in L3 with a high utility score accumulated from prior sessions; the new city record scores lower on frequency because it was just written. The agent retrieves the wrong city.

The architectural fix is explicit versioning and invalidation: every L3 record can carry a created_at timestamp, an optional valid_until timestamp, and a superseded_by pointer when your implementation needs them. Treat this as a proposed schema, not a verified requirement. The retrieval path must filter out superseded records before scoring.
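A minimal sketch of that proposed schema and the pre-scoring filter follows. `MemoryRecord` and `live_records` are hypothetical names; the three fields mirror the ones suggested above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MemoryRecord:
    record_id: str
    text: str
    created_at: float
    valid_until: Optional[float] = None     # None means no expiry
    superseded_by: Optional[str] = None     # id of the record that replaced this one


def live_records(records: list, now: float) -> list:
    """Drop superseded or expired records before any utility scoring happens."""
    return [
        r for r in records
        if r.superseded_by is None and (r.valid_until is None or r.valid_until > now)
    ]


records = [
    MemoryRecord("r1", "User lives in Berlin", created_at=1.0, superseded_by="r2"),
    MemoryRecord("r2", "User lives in Lisbon", created_at=2.0),
    MemoryRecord("r3", "Trial ends soon", created_at=2.0, valid_until=5.0),
]
current = live_records(records, now=10.0)
```

Because the filter runs before scoring, the old city's accumulated frequency never gets a chance to outrank the corrected record.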

Pro Tip: Attach a confidence score and a source label to every L3 record at write time. Facts derived from direct user statements should carry higher confidence and longer TTL than facts inferred by the model from conversation. When two records conflict and neither is explicitly superseded, surface both to the model with their confidence scores rather than silently arbitrating; given both options, the model can usually resolve the conflict or ask the user to confirm.

Mem0's consolidation step — dynamically merging salient memories across sessions — is a write-time deduplication mechanism that partially addresses this, but it does not replace explicit invalidation for user-corrected facts.

Multi-tenant memory isolation

A memory system that serves more than a handful of users must enforce strict namespace isolation at the retrieval layer. Vector similarity search, by default, is global across the index. Without a tenant-scoped filter applied at query time, a retrieved memory from user A can appear in a query response for user B if the embeddings are similar enough.

Production Note: Implement isolation both in your data model and at the retrieval layer. This is production guidance, not a claim drawn from the cited sources. First, partition the data by tenant: in Pinecone, namespaces are the usual mechanism; in pgvector, many teams add a tenant_id column, index it (or partition the table by tenant), and filter on it in every similarity query. Second, enforce the tenant filter in every retrieval call as a hard pre-filter, not a post-filter on results. Post-filtering allows the ANN search to surface cross-tenant candidates before they are discarded; pre-filtering prevents cross-tenant candidates from entering the candidate set at all. For deployments beyond a few thousand tenants, evaluate whether a single shared index with namespace filtering or per-tenant dedicated index shards is more operationally sustainable. The latter accepts more management overhead in exchange for stronger isolation guarantees.
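The difference between the two filtering orders shows up even in a brute-force toy index. The sketch below uses plain Python lists in place of a real ANN index, and the record layout (`tenant_id`, `vec`, `text`) is hypothetical.

```python
def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = (sum(x * x for x in a) ** 0.5) * (sum(y * y for y in b) ** 0.5)
    return dot / norm if norm else 0.0


def search_prefiltered(index, tenant_id, query_vec, top_k=5):
    """Restrict the candidate set to one tenant before ranking."""
    candidates = [r for r in index if r["tenant_id"] == tenant_id]
    ranked = sorted(candidates, key=lambda r: cosine(r["vec"], query_vec), reverse=True)
    return ranked[:top_k]


def search_postfiltered(index, tenant_id, query_vec, top_k=5):
    """Rank globally, then filter: other tenants can occupy the top_k slots."""
    ranked = sorted(index, key=lambda r: cosine(r["vec"], query_vec), reverse=True)
    return [r for r in ranked[:top_k] if r["tenant_id"] == tenant_id]


index = [
    {"tenant_id": "A", "vec": [1.0, 0.0], "text": "A prefers email"},
    {"tenant_id": "B", "vec": [0.6, 0.8], "text": "B prefers SMS"},
]
query = [0.6, 0.8]
pre = search_prefiltered(index, "A", query, top_k=1)
post = search_postfiltered(index, "A", query, top_k=1)
```

With `top_k=1`, the post-filtered search ranks tenant B's record first and then discards it, leaving tenant A with no result at all, while the pre-filtered search never considers B's record and returns A's memory.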

Agentic workflow state management in multi-tenant systems also requires that L2 working memory be scoped per session and per user: a LangGraph checkpoint store keyed by (user_id, session_id) prevents state bleed across concurrent user sessions on the same agent instance.
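Independent of any particular framework, the keying scheme can be sketched as a small durable store whose primary key is the (user_id, session_id) pair. The standard-library `sqlite3` module stands in for Redis or Postgres here, and `save_state` and `load_state` are hypothetical helpers, not LangGraph APIs.

```python
import json
import sqlite3

db = sqlite3.connect(":memory:")   # stand-in for a durable store
db.execute("""CREATE TABLE l2_state (
    user_id    TEXT NOT NULL,
    session_id TEXT NOT NULL,
    state_json TEXT NOT NULL,
    PRIMARY KEY (user_id, session_id))""")


def save_state(user_id: str, session_id: str, state: dict) -> None:
    """Persist the compacted L2 state for exactly one user and session."""
    db.execute("INSERT OR REPLACE INTO l2_state VALUES (?, ?, ?)",
               (user_id, session_id, json.dumps(state)))


def load_state(user_id: str, session_id: str) -> dict:
    """Reload state for one user and session; unknown keys yield an empty state."""
    row = db.execute(
        "SELECT state_json FROM l2_state WHERE user_id = ? AND session_id = ?",
        (user_id, session_id)).fetchone()
    return json.loads(row[0]) if row else {}


save_state("alice", "s1", {"goal": "refund order 1001", "open_tasks": ["verify payment"]})
save_state("bob", "s1", {"goal": "change shipping address", "open_tasks": []})
alice_state = load_state("alice", "s1")
bob_state = load_state("bob", "s1")
```

Two users sharing the same session identifier still get separate rows, so concurrent sessions on one agent instance cannot read each other's plan state.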

Framework reality check: A-MEM, Mem0, and adjacent prototypes

The agent memory framework space in 2026 is still early-stage. Most implementations are research prototypes or thin wrappers over vector databases. Only Mem0 has published a peer-reviewed benchmark with verifiable numbers under a standard evaluation harness.

| Framework | Maturity | Extensibility | Verified benchmark | Scale limits | Verdict |
|---|---|---|---|---|---|
| Mem0 | Production-ready (per authors) | High: pluggable backends, custom extractors | LOCOMO: +5/11/7% QA, >91% latency reduction, >90% token savings (arXiv:2504.19413) | No officially published per-tenant scaling limits | Most evidence-backed option in this article's source set |
| A-MEM | Research prototype | Moderate: Zettelkasten-style note graph | No peer-reviewed benchmark retrieved | Unknown at scale | Interesting architecture; not production-validated |
| LangGraph state | Stable | High: integrates with any Python backend | No memory-specific benchmark; checkpoint latency depends on backend | Scales with checkpoint store (Redis, Postgres) | Solid L2 working memory; not a full memory hierarchy solution |
| Custom pgvector | As mature as your implementation | Unlimited | No published benchmark; depends on implementation | Postgres scaling limits apply | Viable L3 backend; requires you to build L1/L2 yourself |

The honest assessment: no framework in the current ecosystem provides a fully implemented, production-validated three-tier hierarchy with demand paging and eviction policies out of the box. Mem0 comes closest on the archival retrieval and consolidation side. LangGraph provides the scaffolding for L2 state management. Engineers building production systems will need to compose these tools and implement eviction and promotion logic themselves, using the policy specification in this article as a starting point.

FAQ

Bottom Line: Agent memory is not just RAG. RAG is the L3 retrieval mechanism; real agent memory also needs L2 working memory for task state and an eviction/promotion policy that keeps continuity intact across turns and sessions.

What is the memory hierarchy in LLMs?

The memory hierarchy maps agent storage to three tiers analogous to CPU cache: L1 (the active prompt context — high cost, small capacity, re-processed on every call), L2 (session working memory — structured task state and scratchpad that persists across turns but lives outside the prompt by default), and L3 (vector-based long-term archival retrieval — facts and preferences that persist across sessions and are loaded on demand).

How does demand paging work in AI agents?

The agent starts each turn with a minimal L1 containing only the current task state and active instructions. When the agent detects a knowledge gap — a missing fact, a missing plan, a missing user preference — it issues a retrieval call to L3. The retrieved record is loaded into L1 for the current turn. At turn end, non-sticky content is demoted back to L2 or L3. Nothing is preloaded speculatively.

What is the difference between RAG and agent memory?

Bottom Line: RAG is a retrieval mechanism — it fetches semantically similar documents from a vector store and injects them into a prompt. Agent memory is a state management system — it tracks what the agent knows, what it is doing, what it has decided, and what it must remember across sessions. RAG is one component of L3 retrieval. It is not a substitute for L2 working memory or for the eviction and promotion rules that govern the full hierarchy. An agent that only does RAG has no session continuity, no plan state, and no mechanism to update or invalidate stale facts.

Which eviction policy is best for long-term agent memory?

No single policy dominates. LRU is the pragmatic default for L2 session state. LFU is more appropriate for L3 preference stores where frequently accessed facts should outlive inactive ones. Semantic eviction, which scores candidates against the current task embedding, is the strongest fit for long-horizon multi-domain agents but carries higher compute cost. In practice, a hybrid utility function combining recency, frequency, and semantic similarity (as defined in the promotion/demotion section above) can balance these trade-offs better than any single policy.

How do you keep context across sessions in an LLM agent?

L2 compacted state (a structured JSON object representing the agent's goals, decisions, and open tasks) is persisted to a durable store (Postgres, Redis) at session end and reloaded at session start. L3 long-term facts are written to a vector store with tenant-scoped namespacing and retrieved on demand. The combination means the agent resumes with its plan intact (L2) and can retrieve specific historical facts as needed (L3) without reconstructing the entire conversation history.

Sources & References

Production Note: The primary benchmark source for this article is the Mem0 paper (arXiv:2504.19413), which provides the only peer-reviewed, numerically verifiable results on hierarchical agent memory under the LOCOMO benchmark as of this writing. OpenAI GPT-5.4 pricing and context-window specifications are drawn from official OpenAI documentation and are subject to change.

- Mem0: Building Production-Ready AI Agents with Scalable Long-Term Memory (arXiv:2504.19413) — Primary benchmark source; LOCOMO evaluation, latency and token-cost results

- GPT-5.4 Model Launch — OpenAI — 1M-token context window announcement and positioning

- GPT-5.4 API Documentation — OpenAI — Context window limits and pricing tier structure

- pgvector — Open-source vector similarity for Postgres — L3 vector backend reference

- Pinecone Vector Database — Managed vector store; L3 backend with namespace isolation

- FAISS — Facebook AI Similarity Search — Open-source ANN library for L3 retrieval
